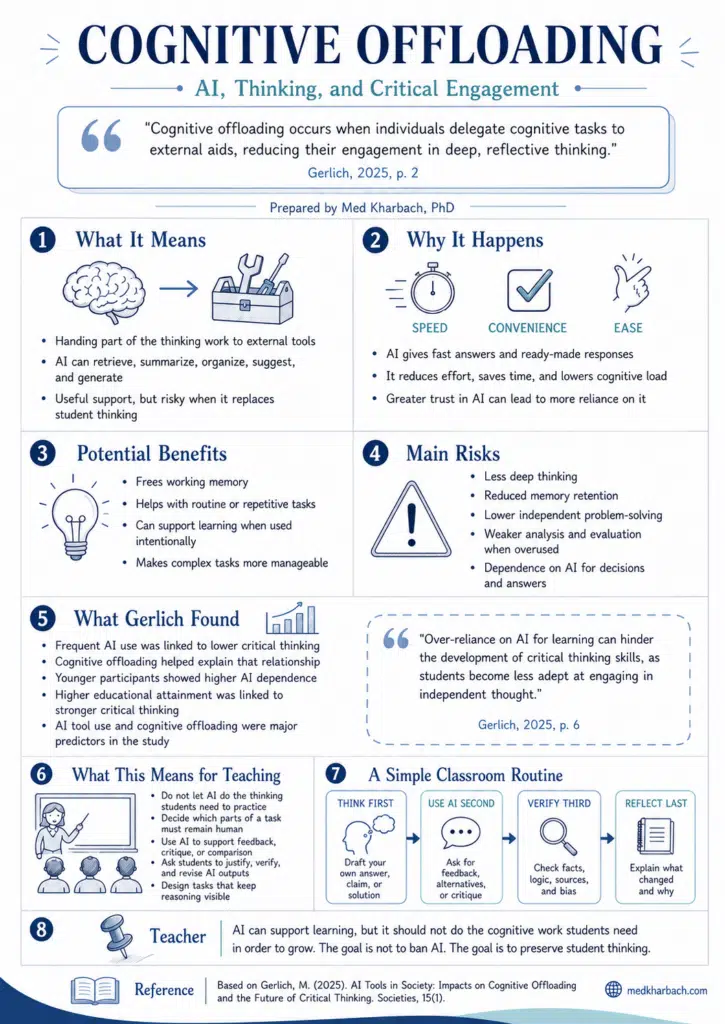

Few concepts have shaped the AI-in-education conversation as quickly as cognitive offloading. I’ve referenced Gerlich (2025) countless times, and it gets cited across the literature for a simple reason: it gives us measurable evidence for something most teachers already sense.

Students using AI heavily do less of the thinking work themselves. Today I built a sketchnote around the concept to make it easier to share, and I want to walk through what the research actually says and where the nuance lives.

The Concept in Gerlich’s Own Words

Gerlich defines cognitive offloading as the “externalisation of cognitive processes, often involving tools or external agents, such as notes, calculators, or digital tools like AI, to reduce cognitive load” (p. 3). He builds on Risko and Gilbert’s foundational 2016 work in cognitive psychology. The novelty is the empirical scale he brings to the question.

His sample is 666 UK adults across multiple age groups and education levels. Gerlich uses the Halpern Critical Thinking Assessment and Terenzini’s self-reported measures, then layers ANOVA, correlation, regression, and random forest regression on top. The results point in one direction.

AI tool use shows a strong negative correlation with critical thinking (r = −0.68), and cognitive offloading appears to be the mechanism. The mediation analysis traces a clear chain: heavier AI use goes with more offloading, and more offloading goes with weaker critical thinking. Participants aged 17 to 25 showed the highest AI dependence and the lowest critical thinking scores. Education level buffered the effect.

Not All Cognitive Offloading Is Bad

This is where teachers need to be careful when reading Gerlich. The findings call for a measured interpretation, not alarm. Humans have been offloading cognition for centuries. A handwritten note, a shopping list, and a calculator all do the same thing on a smaller scale, and we’ve never treated them as failures of human reasoning.

They’re sensible uses of external tools that free mental resources for harder thinking. The question isn’t whether offloading happens. It’s what we offload, and what we do with the freed-up bandwidth.

Gerlich himself acknowledges this. The danger isn’t the act of delegating a task to a tool. The danger is when offloading becomes the default approach to every cognitive challenge, when students stop attempting the problem and skip straight to the AI prompt. That’s the pattern his data show in the youngest group. They aren’t lazy. They’ve grown up with these tools and they reach for them automatically.

This connects to Fan et al.’s work on metacognitive laziness, which describes the same phenomenon from a different angle. Students using AI heavily during writing improved their essays but didn’t improve their underlying knowledge. AI did the lifting. Their cognition stayed parked.

Bastani et al. (2025) reported something similar in their guardrails study. Students using GPT without scaffolding produced better short-term work but performed worse once the tool was removed. Kosmyna et al. (2025) push the argument further with their concept of cognitive debt: EEG recordings showed weaker brain connectivity in participants who wrote essays with ChatGPT than in those who wrote unaided. The findings line up across multiple research programs.

What This Means for Teaching

The teaching response isn’t to ban AI. That ship has sailed, and banning AI doesn’t build the cognitive muscle anyway. The response is to be intentional about sequence and visibility.

The first move is sequence. Students should attempt the reasoning before AI comes into the work. An outline they wrote, a draft they struggled through, a problem they wrestled with for ten minutes. Then AI joins as a critic, a second pair of eyes, a thinking partner.

That sequence keeps the cognitive load on the student where it belongs and uses AI to extend the thinking. The student who outlines their argument before opening ChatGPT comes out of the work having actually argued. The student who starts with the AI prompt comes out with text but not thinking.

The second move is visibility. Students should have to show what AI changed in their work and explain why. Disclosure isn’t a compliance ritual. It’s a metacognitive exercise. When students articulate which AI suggestions they accepted, which they rejected, and why, they’re doing the kind of reflective work Gerlich’s measures actually assess.

Gerlich’s study found that higher education levels buffered the negative effects of AI use. The work of teaching students to think didn’t lose its weight when AI arrived. If anything, it became the thing that protects them from the worst of it. Build the thinking habits first. The tools come second.