Most conversations about AI in education start with the tool. Which platform should we adopt? Should we allow ChatGPT? Those questions have a place, but they belong later in the planning process. The starting point should be pedagogy: what do I want my students to learn, and does AI help them get there? One framework I find particularly useful for this is backward design.

Backward Design: A Framework That Predates ChatGPT

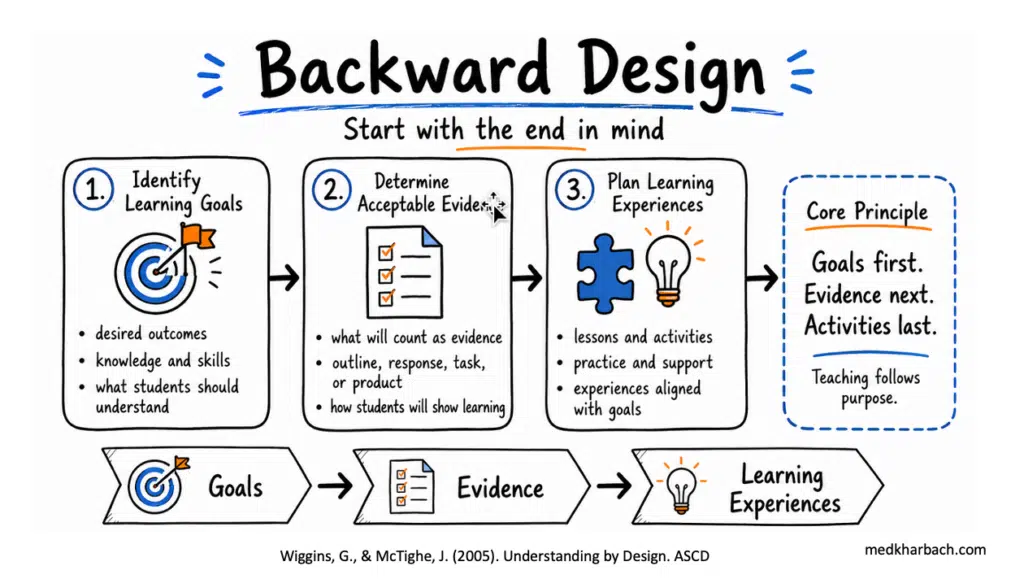

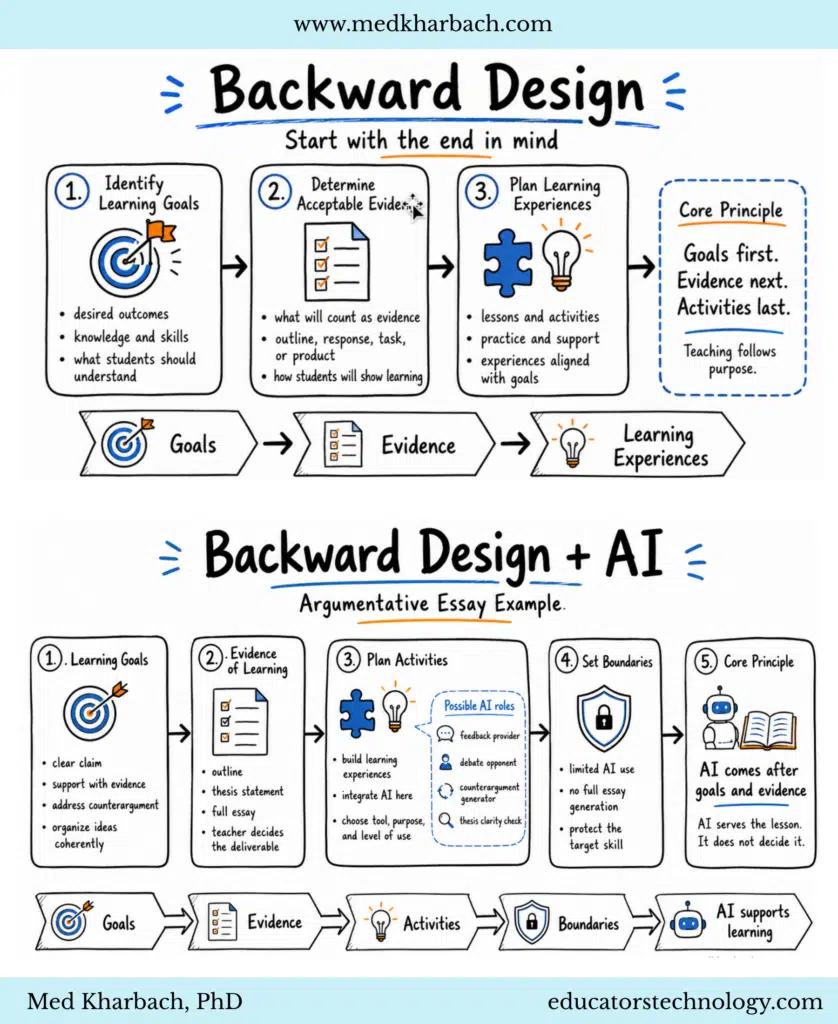

Wiggins and McTighe (2005) describe backward design as part of their Understanding by Design framework. The core idea is straightforward: you don’t plan a lesson by picking activities first. You start with the end in mind and work backward through three stages.

You identify the learning goals first: what should students know, understand, and be able to do? Then you determine acceptable evidence, the kind of student work that proves they’ve met those goals. The last stage is planning the learning experiences, the activities, resources, and support that will help students get there.

It’s goals first, evidence next, and activities last.

This framework has been a staple of instructional design for two decades, and it applies directly to AI integration.

An Argumentative Essay Example

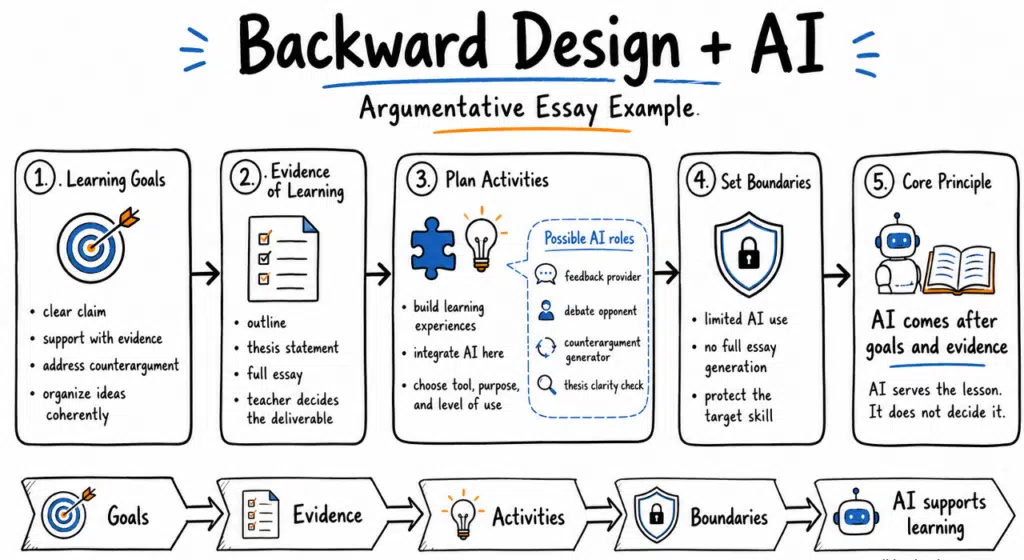

Let’s say I’m teaching argumentative writing. I define the learning goals: students need to develop a clear claim, support it with evidence, address counterarguments, and organize their writing coherently.

Then I ask what will count as evidence that students can actually do this. Will I collect an outline, a thesis statement, or the full essay? I pick the deliverable that makes student thinking visible.

Now I design the learning experiences, and this is where AI can play a role. I might allow students to use AI as a feedback provider on their draft structure, or as a debate opponent that stress-tests their claims. They could use a counterargument generator to challenge weak spots in their reasoning, or run their thesis through an AI clarity check. The tool, the purpose, and the level of use are all deliberate decisions tied to the learning goals I defined at the start.

The Step Most Frameworks Miss: Boundaries

If an activity warrants AI use, and not every activity does, then you need clear boundaries. Full essay generation, in this example, is off the table. It weakens the very skill the lesson is designed to develop.

This connects to a broader issue. Dawson, Bearman, Dollinger, and Boud (2024) argue that assessment validity is a bigger concern than cheating. When submitted work no longer provides reliable evidence of what a student knows and can do, the assessment has lost its purpose. That’s a design problem, not just an integrity problem.

Boundaries protect that validity. They keep AI in a supporting role and preserve the intellectual work the lesson is built around.

Start with the Right Question

Most AI integration conversations start with the tool. I’ve been in dozens of these discussions, and they almost always skip the foundational question.

The right starting point isn’t “Which AI tool should I use?” It’s “What do I want my students to learn, and does AI help them get there?”

If the answer is yes, bring it in with purpose and set the boundaries. If the answer is no, leave it out. The lesson doesn’t need AI to be effective. It needs clear goals, strong evidence design, and thoughtful learning experiences. AI is one piece of that sequence.

Goals → Evidence → Activities → Boundaries → AI supports learning.

References

- Dawson, P., Bearman, M., Dollinger, M., & Boud, D. (2024). Validity matters more than cheating. Assessment & Evaluation in Higher Education, 49(7), 1005-1016.

- Wiggins, G., & McTighe, J. (2005). Understanding by design. ASCD.