Most classrooms that use AI in 2026 are teaching students how to use it. Fewer are teaching students how to question it. There’s a significant gap between a student who can write a good prompt and a student who can look at the output and ask: whose perspective shaped this? What did I already know before I asked? Would this answer change if the question were asked in Arabic or Swahili?

That gap is the space between AI literacy and critical AI literacy, and it’s where the real educational work needs to happen.

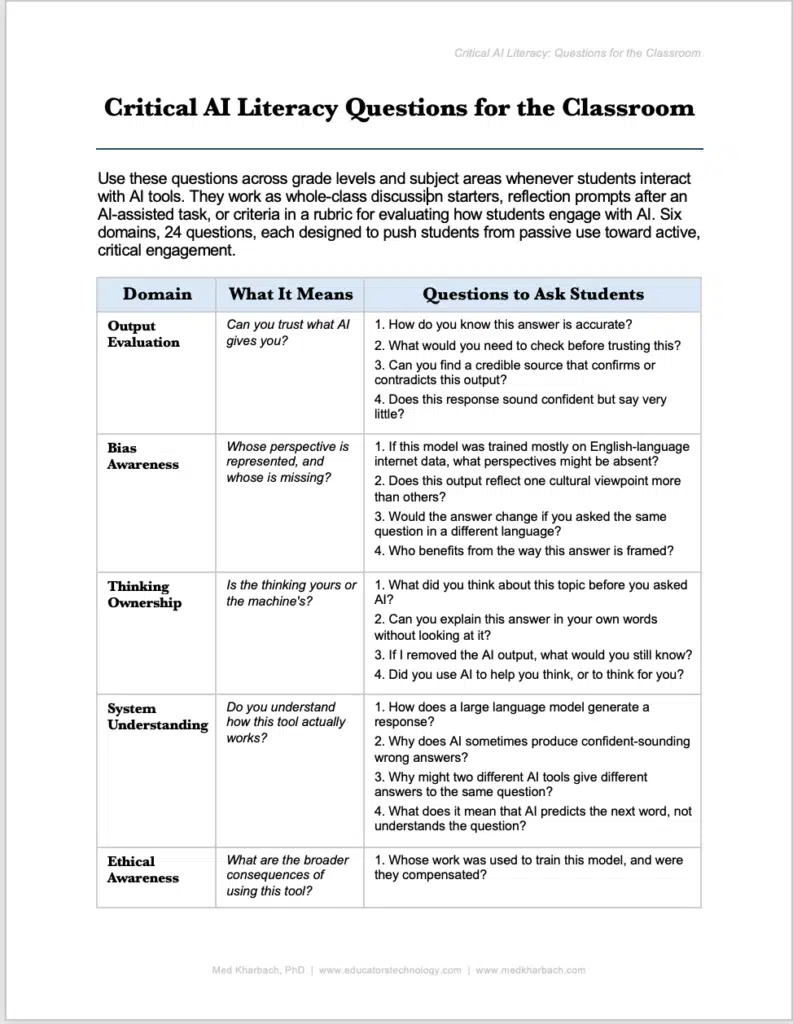

I put together a framework of 24 questions organized across six domains, designed for teachers who want to move their students from passive AI use toward active, critical engagement. These questions are part of a larger guide I recently published called Critical AI Literacy: A Short Guide for Teachers, which includes research-based definitions, a comparison framework, and practical implementation strategies. You can download the full guide [here].

The questions work as whole-class discussion starters, reflection prompts after an AI-assisted task, or criteria in a rubric. They travel across subjects and grade levels because they target thinking habits, not specific tools.

These questions are available in PDF form to use in your class!

The Six Domains

Each domain asks a different foundational question about how students relate to AI.

Output Evaluation: Can You Trust What AI Gives You?

This is where most teachers start, and it’s the right starting point. Students need to learn that AI-generated responses can sound polished and authoritative and still say very little of substance. The questions here push students to verify, cross-reference, and develop skepticism. “Does this response sound confident but say very little?” is a question that teaches a transferable skill.

It applies to AI outputs, but it also applies to news articles, political speeches, and social media posts. The research on AI hallucination makes this domain urgent. Models generate statistically plausible text, not verified facts, and students who don’t understand that difference risk absorbing misinformation wrapped in fluent prose.

Bias Awareness: Whose Perspective Is Represented, and Whose Is Missing?

This domain gets at something most AI literacy curricula skip entirely. A question like “If this model was trained mostly on English-language internet data, what perspectives might be absent?” asks students to think about the dataset behind the tool, not just the output in front of them. Another question, “Who benefits from the way this answer is framed?”, introduces the idea that AI outputs aren’t neutral.

They reflect the data they were trained on, the choices their developers made, and the commercial interests that funded them. I’ve covered Roe, Furze, and Perkins’ (2025) “digital plastic” metaphor on this blog, and their argument that AI outputs should be treated as raw material to be reshaped and questioned connects directly to what this domain asks students to do.

Thinking Ownership: Is the Thinking Yours or the Machine’s?

This is the domain I care about most, and it’s the one that connects to the growing body of research on cognitive offloading. “What did you think about this topic before you asked AI?” forces students to recognize their own prior knowledge before an AI tool floods the space with its response. “Did you use AI to help you think, or to think for you?” is the question that separates productive AI use from intellectual dependency.

Gerlich’s (2025) research on cognitive offloading found that heavy AI reliance correlated with reduced critical thinking scores. Shaw and Nave (2026) coined the term “cognitive surrender” for the same phenomenon. These aren’t abstract concerns. They describe what happens when students stop doing their own cognitive work because a tool does it faster. The questions in this domain make thinking ownership visible and measurable.

System Understanding: Do You Understand How This Tool Actually Works?

Students don’t need a computer science degree to use AI critically, but they need a basic mental model of what’s happening when they type a prompt. “What does it mean that AI predicts the next word, not understands the question?” is a question that reframes the entire relationship between the student and the tool.

Once a student understands that the model is generating statistically probable sequences, not reasoning through a problem, they approach the output differently. They stop treating it as an authority and start treating it as a draft. Chee, Ahn, and Lee’s (2025) AI literacy competency framework includes technical understanding as a foundation for critical engagement, and I agree with that sequencing. You can’t question a system you don’t understand at all.
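For teachers comfortable with a little Python, the idea of "predicting the next word" can be made concrete with a toy example. The sketch below is a deliberately simplified illustration, not how real language models are built: production systems use large neural networks over tokens, while this one just counts which word most often follows another in a tiny made-up corpus. The corpus and the function names (`build_bigram_counts`, `predict_next`, `generate`) are my own assumptions for the demonstration.

```python
from collections import Counter, defaultdict

# Tiny invented corpus, purely for illustration (not real training data).
CORPUS = (
    "the cat sat on the mat . "
    "the cat sat on the rug . "
    "the dog ate the fish ."
)


def build_bigram_counts(text):
    """Count how often each word is followed by each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if never seen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(counts, start, length=5):
    """Repeatedly append the most likely next word, starting from `start`."""
    out = [start]
    for _ in range(length):
        nxt = predict_next(counts, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


counts = build_bigram_counts(CORPUS)
print(predict_next(counts, "the"))  # cat
print(generate(counts, "the"))      # the cat sat on the cat
```

The generated sentence, "the cat sat on the cat," reads smoothly and means nothing, because the program only follows frequency patterns. That makes it a useful discussion prompt: students can see fluent-sounding output produced with no check on whether it is true or sensible.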

Ethical Awareness: What Are the Broader Consequences of Using This Tool?

“Whose work was used to train this model, and were they compensated?” is a question most adults can’t answer confidently, let alone students. But asking it opens a conversation about labor, intellectual property, and the economics of AI that students deserve to have. The environmental cost question matters too. Running large language models at scale has real energy and resource implications, and students who use these tools daily should know that.

Strategic Use: Are You Using AI Intentionally?

The final domain is about intentionality. “What is the learning goal of this task, and does using AI support or undermine it?” is the single most important question a student can ask before opening any AI tool. If the goal is to develop your own argument and you let AI write it, you haven’t used AI strategically. You’ve skipped the learning. “Could you have done the difficult thinking first and used AI to refine it?” reframes AI as a revision tool, not a drafting tool, and that reframing changes the order of the work: the student’s own thinking comes first, and the tool comes second.

How to Use These Questions

You don’t need to use all 24 at once. Pick two or three before an AI-assisted task and share them as thinking prompts. Use them as reflection questions afterward. Build them into your rubrics. The point is repetition: students develop critical AI literacy the same way they develop any other literacy, by practicing the questions until they become automatic.

I also recently shared a guide on critical thinking activities for the classroom that serves as a natural companion to this framework. The habits of mind are the same: questioning sources, evaluating evidence, identifying assumptions. The only difference is the object being questioned.

These 24 questions won’t solve every challenge AI brings to the classroom. But they’ll change the conversation from “how do I use this tool?” to “what is this tool doing to my thinking?” That’s where the real work begins.

References

- Chee, H., Ahn, S., & Lee, J. (2025). A competency framework for AI literacy: Variations by different learner groups and an implied learning pathway. British Journal of Educational Technology, 56, 2146-2182. https://doi.org/10.1111/bjet.13556

- Gerlich, M. (2025). AI tools in society: Impacts on cognitive offloading and the future of critical thinking. Societies, 15(1), Article 6. https://doi.org/10.3390/soc15010006

- Roe, J., Furze, L., & Perkins, M. (2024). Funhouse mirror or echo chamber? A methodological approach to teaching critical AI literacy through metaphors (arXiv:2411.14730). arXiv. https://doi.org/10.48550/arXiv.2411.14730

- Roe, J., Furze, L., & Perkins, M. (2025). Digital plastic: A metaphorical framework for Critical AI Literacy in the multiliteracies era. Pedagogies: An International Journal. https://doi.org/10.1080/1554480X.2025.2557491

- Shaw, S. D., & Nave, G. (2026). Thinking fast, slow, and artificial: How AI is reshaping human reasoning and the rise of cognitive surrender. Working paper, The Wharton School, University of Pennsylvania. https://papers.ssrn.com/sol3/papers.cfm?abstract_id=6097646

- U.S. Department of Education, Office of Educational Technology. (2024). Empowering education leaders: A toolkit for safe, ethical, and equitable AI integration. U.S. Department of Education. https://files.eric.ed.gov/fulltext/ED661924.pdf